MDE#10 Vision Pro's Biofeedback Edge

A headset that learns from your data 🤖

⏳ 5 min read

I know… I know… I’m lagging behind, haha. I think I’m the last one to write about Apple’s new headset. Anyway, MDE is not a breaking-news newsletter but a space for reflection on XR ethics and the metaverse ecosystem, so articles here don’t carry the urgency of a news feed. 😉

Why talk about Vision Pro? I don’t usually write about specific products here, but this one deserves special attention. Its release is a statement for the XR and metaverse field: Apple is legitimizing XR as the next wave of computing. Yeah, they call it spatial computing to distance it from the metaverse and VR buzz, but we know it’s more of the same.

The XR community had known about Apple’s soon-to-be-released headset for years. We knew Apple would set the bar for excellence in the XR space and, just as importantly, make the device appealing to mainstream users so that XR finally reaches consumers.

You may disagree with me now: is $3,499 mainstream? Of course not. But this device is just the first in a series of Vision Pros that will become lighter, cheaper, and accessible to more budgets. Just envision a beautiful, sleek Vision Air… This is only the start of mainstream adoption. ✨

Now let’s dig in!

Biofeedback. What is that?

According to Wikipedia, “Biofeedback is the process of gaining greater awareness of many physiological functions of one's own body by using electronic or other instruments, and with a goal of being able to manipulate the body's systems at will.”

For biofeedback to happen, you need a sensor that measures data from your body and a system to process that data. Once the system has processed your bodily data, it can learn from it and tailor its responses to your body. Most wearables work this way.
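To make that sense, process, learn, and respond loop a bit more concrete, here is a toy sketch in Python. It is not how any Apple device works: the `BiofeedbackLoop` class, the smoothing factor, and the ±5 threshold are hypothetical assumptions, and the readings could be any bodily signal, such as heart rate in beats per minute.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BiofeedbackLoop:
    """Toy sense -> process -> learn -> respond loop (hypothetical, not a real device API)."""

    alpha: float = 0.2       # assumed smoothing factor for learning the user's baseline
    threshold: float = 5.0   # assumed deviation that triggers an adaptation
    baseline: Optional[float] = None

    def update(self, reading: float) -> str:
        # Sense: the very first reading simply seeds the personal baseline.
        if self.baseline is None:
            self.baseline = reading
            return "calibrating"
        # Process: compare the new reading with what is "normal" for this user.
        deviation = reading - self.baseline
        # Learn: nudge the baseline toward the new reading (exponential moving average).
        self.baseline += self.alpha * deviation
        # Respond: adapt the experience to the body's current state.
        if deviation > self.threshold:
            return "slow down"
        if deviation < -self.threshold:
            return "speed up"
        return "steady"


loop = BiofeedbackLoop()
for bpm in [70, 80, 72, 60]:
    print(bpm, loop.update(bpm))
```

Feeding it 70, 80, 72, and 60 yields "calibrating", "slow down", "steady", and "speed up": the same reading can trigger different responses depending on what the system has learned is normal for you.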

For example, the Apple Watch’s Breathe app guides you through breathing sessions while the watch’s heart-rate sensor tracks how your body responds.

Biofeedback, or “biological feedback,” simply means that the device adapts to the information it receives from your body.

Of course, there’s a dark side of the Force too… Biofeedback can be a remarkably precise manipulation tool. When a device gets to know your body and physiology, you are more exposed than ever to apps that can manipulate you.

Biofeedback and Vision Pro

Vision Pro is an advanced headset backed by more than 5,000 patents filed over more than ten years of development.

What is fascinating is how biofeedback is integrated into the headset to anticipate the user’s interactions. This is pioneering in the field. 🚀

Besides hand-gesture and voice-command controls, the headset relies on several eye-gaze patents that enable the seemingly “natural interactions” that make the device “just work”.

Disclaimer: I was not among the privileged few who tested the device. What I write here is based on the launch event and some reviews from people who did test it.

So instead of testing the device, I dug into some of its neurotechnology patents.

Researcher Sterling Crispin co-developed three very interesting patents while at Apple. They help predict users’ behavior and adjust the mixed-reality visuals accordingly. Let’s check them out!

Eye-gaze based biofeedback. Evaluates whether users are attentive to an experience to adjust the UI accordingly.

Biofeedback method of modulating digital content to invoke greater pupil radius response. Analyzes users’ pupil dilation in response to certain visual features. The system learns from users’ responses and then adjusts the content to enhance the experience for the user.

Utilization of luminance changes to determine user characteristics. Identifies whether users are paying attention, distracted, or even mind-wandering by analyzing their pupillary response to luminance changes in an experience.
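The luminance patent builds on the pupillary light reflex: when a scene gets brighter, the pupil constricts. As a toy illustration only (this is not the method described in the patent), the sketch below measures how strongly a pupil constricts after a brightness step and compares it with a response calibrated for that user. The function names, the 5-sample window, and the 50% rule are hypothetical assumptions.

```python
from statistics import mean
from typing import Sequence


def constriction_ratio(pupil_mm: Sequence[float], step_index: int, window: int = 5) -> float:
    """Relative pupil constriction after a luminance increase at sample `step_index`."""
    if not 0 < step_index < len(pupil_mm):
        raise ValueError("step_index must leave samples before and after the luminance change")
    # Average pupil diameter just before the scene gets brighter.
    before = mean(pupil_mm[max(0, step_index - window):step_index])
    # Smallest diameter reached shortly after the change (the light reflex constricts the pupil).
    after = min(pupil_mm[step_index:step_index + window])
    return (before - after) / before


def attention_label(ratio: float, expected_ratio: float, tolerance: float = 0.5) -> str:
    # Hypothetical rule: an attentive viewer shows at least `tolerance` times
    # the constriction measured for them during calibration.
    return "attentive" if ratio >= tolerance * expected_ratio else "possibly distracted"


# Pupil diameter in millimetres; the scene brightens at sample 5.
trace = [4.0] * 5 + [3.6, 3.4, 3.3, 3.3, 3.4]
ratio = constriction_ratio(trace, step_index=5)
print(round(ratio, 3), attention_label(ratio, expected_ratio=0.2))
```

A real system would have to separate attention from everything else that moves the pupil (fatigue, emotion, the content itself), which is exactly why this kind of signal is so revealing.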

In Sterling’s words: “There were a lot of tricks involved to make specific predictions possible, which the handful of patents I’m named on go into detail about. One of the coolest results involved predicting a user was going to click on something before they actually did. That was a ton of work and something I’m proud of. Your pupil reacts before you click in part because you expect something will happen after you click. So you can create biofeedback with a user's brain by monitoring their eye behavior, and redesigning the UI in real time to create more of this anticipatory pupil response. It’s a crude brain computer interface via the eyes, but very cool.”
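The anticipatory pupil response Sterling describes can be pictured as a toy predictor: if the pupil dilates above its recent baseline while the user is looking at a button, flag a likely click. This is a hypothetical sketch, not Apple’s implementation; the function name, window sizes, and 5% threshold are assumptions, and a real system would also need to rule out other causes of dilation, such as the scene getting darker.

```python
from statistics import mean
from typing import Sequence


def predict_click(
    pupil_mm: Sequence[float],
    gaze_on_target: bool,
    baseline_window: int = 10,
    recent_window: int = 3,
    threshold: float = 0.05,
) -> bool:
    """Toy predictor: anticipatory dilation while looking at a target suggests a click is coming."""
    if len(pupil_mm) < baseline_window + recent_window:
        return False  # not enough samples to establish a baseline yet
    # Baseline: average diameter in the window just before the most recent samples.
    baseline = mean(pupil_mm[-(baseline_window + recent_window):-recent_window])
    # Recent: average diameter over the last few samples.
    recent = mean(pupil_mm[-recent_window:])
    dilation = (recent - baseline) / baseline
    # Only predict a click when the eyes are on something clickable.
    return gaze_on_target and dilation > threshold


samples = [3.0] * 10 + [3.3, 3.3, 3.3]  # about 10% dilation in the last three samples
print(predict_click(samples, gaze_on_target=True))
print(predict_click(samples, gaze_on_target=False))
```

The gaze check matters: dilation alone could mean many things, but dilation while staring at a button is a much stronger hint, which is why eye tracking and pupil sensing work so well together.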

So, we could even talk about BCIs (brain-computer interfaces). It’s still early for this type of technology to reach mainstream adoption and consumer uses; just witness the controversy around Neuralink’s human trials. However, Apple masterfully introduces physiological sensors in its headset that point in that direction. Biofeedback is the future.

So what can we do?

Well, that was more of an informative post.

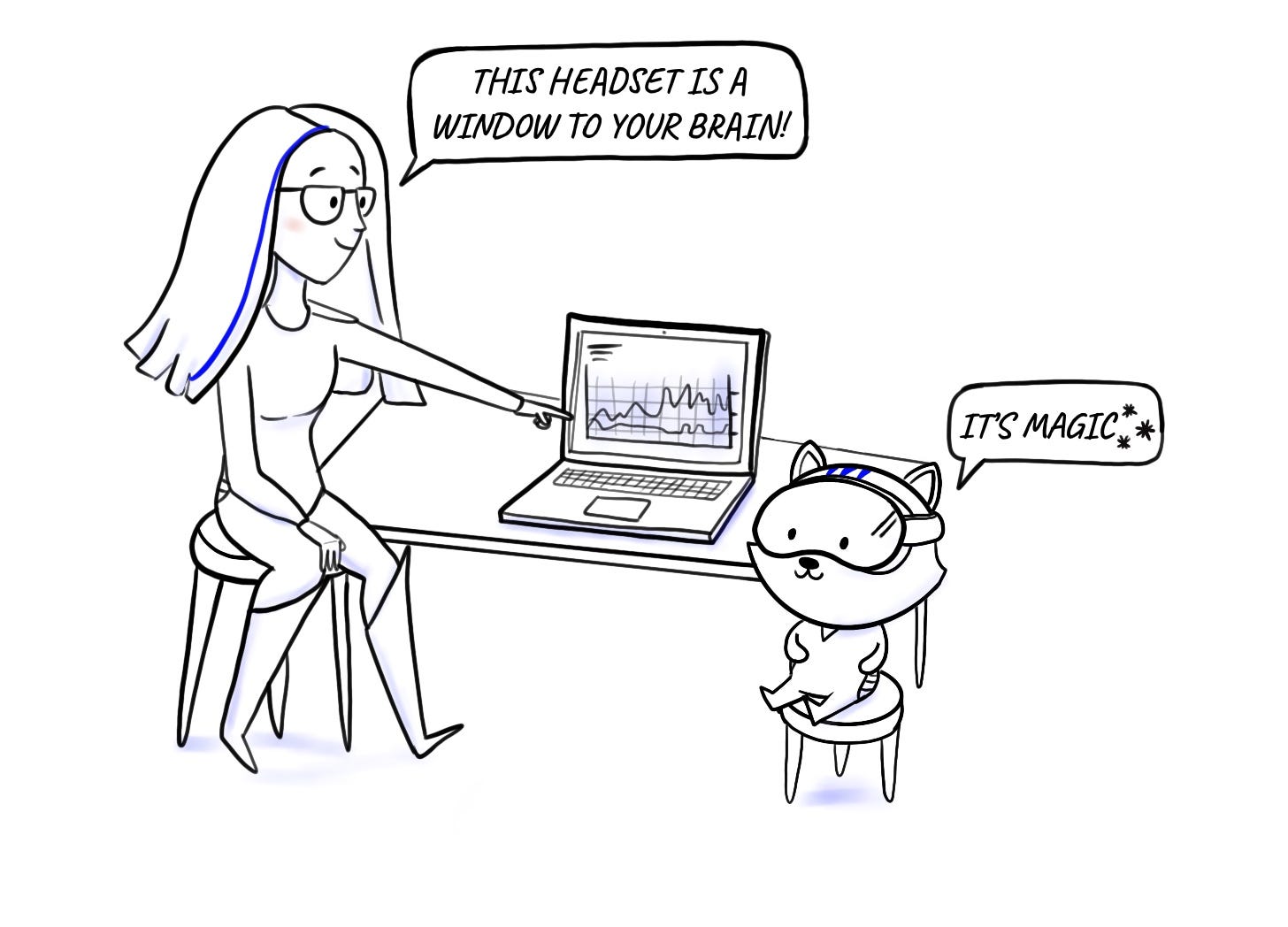

What matters is being aware that neurotechnology is being embedded in advanced headsets to create the “magic” we’re all looking for.

It’s not magic, haha… it’s the headset reading the user ;-)

Now, you know.

Bye for today!

News of the past month: I literally packed up my life. My chapter in Denmark has come to an end, and June was all about piling stuff into boxes and coordinating the logistics of my international move. Where to? Paris! New adventures await me there. Can’t wait to share more soon 🎉

Thanks for reading until the end! I’m looking forward to having you as part of this growing community. Just click below 👇 to stay updated on the next ones.